Your IT department might be kind enough to provide you with a centralized development server, which seems nice at first, and for some reason this remains a popular setup. Over the years, I've seen various intranet variations of it, and even setups routed over the public internet to a VPS provider such as DigitalOcean. However, just because you can doesn't mean you should. Let's look at a number of reasons why this is a bad idea.

1. Modern IDEs perform better on local SSDs

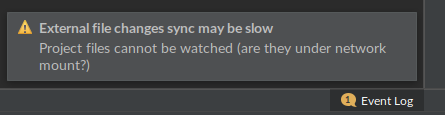

Web applications consist of an ever-increasing number of small files that modern IDEs need to index. Indexing over a network drive is often painfully slow, and watching files for changes frequently doesn't work at all. For instance, JetBrains IntelliJ IDEA, in my opinion one of the best polyglot IDEs, helpfully throws a warning when you attempt to load files directly over a network drive, e.g. an SSHFS mount.

Needless to say, triggers on file changes do not work, making your automated build system useless.
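To see why, consider what a build trigger has to do: notice that a file changed. Many watchers rely on OS change notifications (such as inotify on Linux), which don't reliably work on network mounts, so the fallback is polling. Below is a minimal polling sketch; it is an illustration, not how IntelliJ IDEA or any particular build tool is implemented, and the function names `snapshot`, `changed_files` and `watch` are hypothetical. Every poll walks the whole tree and stats every file, which is cheap on a local SSD but can mean a network round trip per file on a remote mount.

```python
import os
import time
from typing import Callable, Dict, Set


def snapshot(root: str) -> Dict[str, float]:
    """Map every file below `root` to its last modification time."""
    mtimes: Dict[str, float] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                # One stat() call per file: cheap on a local SSD,
                # potentially a network round trip on an SSHFS or NFS mount.
                mtimes[path] = os.stat(path).st_mtime
            except FileNotFoundError:
                continue  # the file disappeared between listing and stat()
    return mtimes


def changed_files(before: Dict[str, float], after: Dict[str, float]) -> Set[str]:
    """Return paths that were added, modified or removed between two snapshots."""
    added_or_modified = {path for path, mtime in after.items() if before.get(path) != mtime}
    removed = set(before) - set(after)
    return added_or_modified | removed


def watch(root: str, on_change: Callable[[Set[str]], None], interval: float = 1.0) -> None:
    """Poll `root` forever and call `on_change` whenever something changed."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        changes = changed_files(previous, current)
        if changes:
            on_change(changes)  # e.g. kick off a rebuild
        previous = current
```

With thousands of files in a typical web project, each polling interval multiplies that per-file cost, which is why watching a tree over the network quickly becomes impractical.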

2. Logging is slow

Depending on your connection and disk speed, tailing logs can become a pain when they aren't written to disk in a timely fashion.

3. Files may change in memory before being written to disk

This is especially infuriating. Patch a piece of code, see your browser reload, and notice nothing changed. One of those "wtf" moments.

4. Broken pipes

Ceci n'est pas une pipe ("This is not a pipe")...

Working over a remote connection brings its own set of problems, especially when layering technologies such as SSH over a VPN, a common scenario in corporate environments. Poor connections and frequent disconnects make programming cumbersome, to say the least.

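However, if you really must, autossh (which automatically re-establishes dropped SSH connections) combined with tmux (which keeps your session running on the server across disconnects), or alternatively mosh, can mitigate the issue.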

5. You always need an internet (or at least intranet) connection

Excited about that business trip over the Atlantic? Finally some time to catch up on that project on your long flight? Think again: without an internet connection, you can't access your development environment. Granted, many airlines provide Wi-Fi nowadays, but you still have all the issues of slow connections, firewalls, VPN connectivity and so on.

6. Disk is always full

Fact of life: ops always allocates a disk that's too small, since "it's only a development server anyway".

The disk can fill up quickly when just a few developers debug a crash and store large core dumps. A coffee-deprived developer might inadvertently create an infinite loop that writes a debug log file several gigabytes in size before realizing their mistake.

Once the disk is full, the party is over: development grinds to a halt for everyone until someone with sufficient permissions is paged to clear some space or scale up the disk. Meanwhile, management wonders why development is always behind schedule.

Autoscaling, anyone?

7. Firewall woes

Since your server is accessed over a network, whether public or private, it needs to be properly firewalled. However, firewall misconfigurations can block the connections that tools such as Xdebug depend on (Xdebug's step debugger connects from the server back to the IDE on your machine), a problem that wouldn't exist if development were done locally.

8. Accidental crashes

It happens even to the best of us: you accidentally create an infinite loop or a memory hog. If you were developing on your local computer, the worst case would be a forced reboot.

However, do the same on a centralized server and something like this happens. First, wait for SSH to respond. Try to find the hanging process using ps, htop and whatnot, which obviously takes ages because the server is clogged. Finally, find the process and attempt to kill it, only to discover you don't have permission to do so. Page the server admin, who is of course out for lunch, home sick or on vacation at that exact moment. By the time they take a look at the issue, the server is so bogged down that it needs a reboot.

Meanwhile, your developer colleagues curse the system for wasting a good bit of their precious time as well, since they can't access the development server while all of this is going on.

Wrong reasons for clinging to your centralized development environment

1. You want the same environment for all developers

Sure, having a centralized development server means you can install and configure everything "just once" and have all developers use the same environment, seemingly getting rid of problems like "it works on my computer". However, that's the wrong reason to run a centralized server. Instead, use modern tools like Docker or Vagrant to give your developers reproducible environments they can run locally with minimal effort.

2. Less maintenance

Highly unlikely. Your developers most likely have their own laptops or desktops that need to be managed anyway, so running a centralized server won't make the maintenance burden any lighter. In fact, any maintenance carried out on the server during business hours will disrupt the workflow of all your developers at once.

3. Automated backups

You might be worried about losing work if a hard drive on a developer's computer fails. Since good version control practice calls for small, atomic commits that are pushed regularly, you should never lose more than half a day's work, even in a catastrophic hard drive failure. If your commits aren't atomic and your developers don't push often enough, you have a workflow issue, not a reason to run a centralized server.

4. You want to mimic the production environment

Not for development, you don't. The purpose of a development environment is to give developers the tools they need to do their job as productively as possible. This means the environment should provide debuggers, profilers and similar software that most definitely should not be installed in the production environment.

What you actually want for testing is a separate staging environment that mimics the production environment.

5. Better employee monitoring

Thankfully, this isn't 1984. If you're trying to get an overview of what your developers are working on by looking at their development environments, you're going to fail. These environments change frequently and don't always give a clear picture of what's being worked on. To manage this, you need other tools, such as a project management platform like Taiga and/or a ticketing system.

If you really feel that this is a problem for you, you're actually trying to solve a management issue with the wrong technological tools.

6. Easier code sharing among developers

No. Just no. That's what your version control system is for: it keeps a history and lets developers share code, e.g. via branches. Use it instead of manually messing around with the file system.

7. Fear of source code leaks

A moot point. What's stopping a developer from cloning the repository to their local computer, given that they already have access to it on the remote server? If you're worried about this, enforce a policy that makes full-disk encryption mandatory on your staff's devices and/or adopt other security measures.

What you should do instead

1. Use the built-in web server

Modern versions of PHP and Python ship with built-in web servers intended specifically for local development. There is no need to mess around with Apache configuration files or iptables. For something more advanced, an application server such as uWSGI is trivial to spin up locally in a Docker container and does a fantastic job of serving your application and static files in development.
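As a minimal sketch of how little setup this takes in Python, the standard library's `http.server` module can serve a directory on the loopback interface. This assumes Python 3.7 or later (for `ThreadingHTTPServer` and the handler's `directory` argument); the helper names `make_dev_server` and `start_in_background` are hypothetical. Like the built-in servers the text mentions, it is meant for development only, not production.

```python
import functools
import http.server
import threading


def make_dev_server(directory: str, port: int = 8000) -> http.server.ThreadingHTTPServer:
    """Create a server that serves `directory` on the loopback interface only."""
    handler = functools.partial(http.server.SimpleHTTPRequestHandler, directory=directory)
    # Binding to 127.0.0.1 keeps the server off the network: no Apache
    # configuration and no firewall rules to maintain.
    return http.server.ThreadingHTTPServer(("127.0.0.1", port), handler)


def start_in_background(server: http.server.ThreadingHTTPServer) -> threading.Thread:
    """Run the server in a daemon thread; stop it with server.shutdown()."""
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    return thread
```

For quick use without any code, `python -m http.server 8000 --bind 127.0.0.1 --directory public` does the same from the command line (with `public` standing in for your own static directory), and PHP's equivalent is `php -S localhost:8000`. Passing `port=0` to `make_dev_server` lets the OS pick a free port, which `server.server_address[1]` then reports.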

2. Use a CI/CD server

Since the development environment is, by design, quite different from the production environment, you'll most likely want to automate testing and deployments with a continuous integration server such as Drone. This, on the other hand, is something you'll definitely want to run on a centralized server.

3. Commit the configuration

Once you have a working development setup, commit the configuration for it, e.g. your Webpack configuration files, along with the project's source code. This way all developers use the same configuration and new developers can start working out of the box.

Bonus: give the reload button a well-deserved retirement

Hitting F5 or your browser's reload button manually is so '90s. Take full advantage of hot module replacement with a tool such as Webpack: its development server not only modernizes your front-end workflow but can also proxy requests to your local backend development server, saving you a bunch of time along the way.